Ethical AI is an evolving concept of technological morality. As AI grows more powerful, we need to build human values into it to preserve the integrity of humankind.

In a world where machines can think, answer questions, and even make decisions, we must ask a tough question: how far is too far? Artificial intelligence, once just a dream, now shapes our everyday lives. But great power demands great responsibility, which is why Ethical AI is necessary. The field of Responsible AI asks ‘Where do we draw the line?’ so that this overwhelming power can be limited and controlled.

At Wokegenics, we build smarter tech that serves people, not the other way around. So, let’s break it down: what ethical AI is, why it matters, and how we can make sure machines never cross the line.

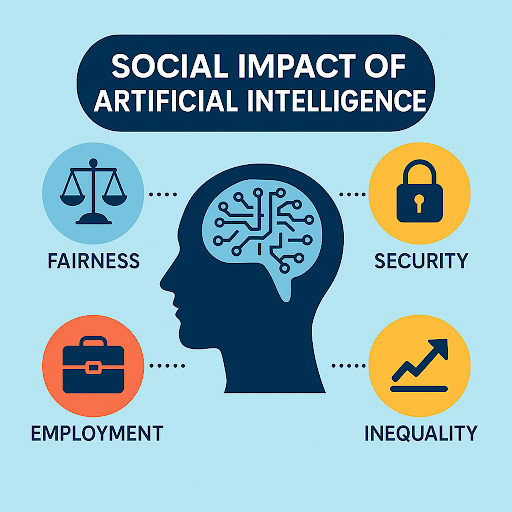

Ethical AI means creating machines that help people while staying within human values. It is about doing things the right way, not just the fast or smart way. The goal is not just better performance, but fairness, safety, freedom from bias, and trust.

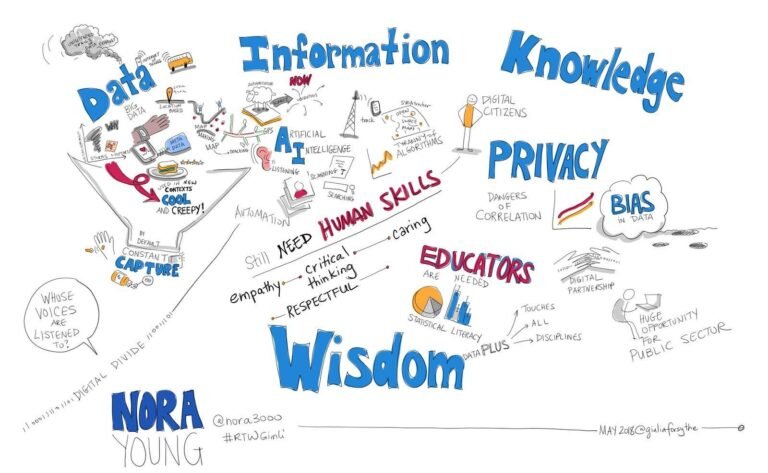

We achieve ethical AI by making sure machines do not harm, discriminate, or lie. It starts with the people who build them. Developers need to think deeply about the tools they create. Are they safe? Are they fair to everyone? Do they respect privacy?

Creating such systems involves careful design. Every line of code should follow rules that put people first. It is not just a technical job; it is a moral one. This is why companies like Wokegenics believe tech should always stay human at heart. That means no shortcuts when it comes to ethics.

AI is great when used for the right things: speeding up work, giving better healthcare advice, improving education, and making daily life easier. But there are places where AI should not go.

This is where the line must be drawn. When machines begin to affect someone’s future or safety, a human must always be in the loop. Blind trust in automated systems can be dangerous, especially when no one is held accountable for their actions. The question is not just “Can we do it?” but also “Should we?” That is the real question.

Building ethical AI is not just a job for tech teams. It is a shared effort among governments, companies, and even users like you and me.

Governments must step in with strong, clear laws. These rules should protect people’s privacy, ensure fairness, and hold companies accountable when things go wrong. Just as we have food safety checks, we need regular audits of AI systems. India’s Digital Personal Data Protection Act, 2023 (No. 22 of 2023) is one such law that should be made more stringent in line with the principles of Ethical AI.

Companies, on the other hand, must take the lead before the law comes knocking. It is time to set up internal ethics panels. Test systems for bias. Be open about how decisions are made. No black boxes: systems should be explainable, in line with the EU AI Act’s requirements on transparency and risk assessment. No secret formulas. At Wokegenics, we follow these values every day. We believe in full transparency, privacy-first tools, and building only what helps, not harms.

Individuals must stay alert. Read the terms. Ask questions. Choose tools from brands that put your well-being first. Use tech, but do not let it use you.

We are standing at the start of something big. AI is changing the world. But how it changes us depends on the choices we make today. The line between helpful and harmful is thin. But if we stay grounded in human values, we can build a future where machines make life better, not worse.

Wokegenics is working on tools that solve real problems rather than create new ones. Whether it is a smart-city system, a healthcare app, or a chatbot, our aim stays the same: people first, always. So, where do we go from here? We keep asking questions. We keep learning. And most of all, we stay human in how we build, use, and trust technology.

If you are building a product, launching a tool, or just thinking about using AI in your service, pause for a moment. Ask yourself not just how it works, but why it exists. At Wokegenics, we are ready to help you create solutions that are not just smart, but right. Let’s make something better, together.

References:

https://www.unesco.org/en/artificial-intelligence/recommendation-ethics

https://www.brookings.edu/articles/the-ethics-of-artificial-intelligence/

https://www.ibm.com/think/topics/ai-ethics

https://artificialintelligenceact.eu/

https://prsindia.org/billtrack/the-digital-personal-data-protection-bill-2023